The Rise of the 'Always On' Workplace and AI Monitoring

Imagine this: you’re finishing up a project at home, and you feel a nagging sense you’re still "at work." That’s the reality for a growing number of employees. The line between professional and personal life is blurring, and a lot of that is thanks to the increasing use of artificial intelligence to monitor employees. It’s not just about tracking keystrokes anymore.

Employers are now deploying AI tools to analyze everything from email sentiment and chat logs to facial expressions during video calls. Activity tracking software monitors which applications you use and for how long, while some companies even use AI to assess employee engagement based on communication patterns. This constant surveillance creates an "always on" workplace. Employees are rightly worried about their privacy.

The technologies involved include natural language processing to gauge employee morale, computer vision to monitor work areas, and machine learning algorithms to predict employee turnover. While companies often frame this as a way to improve productivity and efficiency, the potential for misuse and the erosion of trust are significant. We're seeing a clear need for updated regulations to protect workers’ rights in this new era.

California’s New AI Disclosure Law: A First Look

California is leading the charge with Assembly Bill 3312 (AB 3312), which went into effect January 1, 2026. This law requires employers to disclose to applicants and employees if they are using automated decision-making systems in the hiring, performance evaluation, or promotion processes. It’s a landmark piece of legislation, and it’s different from anything we’ve seen before in US employment law.

Specifically, AB 3312 mandates that employers provide a notice to employees before using an automated decision-making system. This notice must detail the types of automated systems used, how they work, and what data is collected. Employers also need to explain how the system makes decisions and how employees can challenge those decisions. The law doesn’t just cover decisions made by AI, but also systems that significantly assist in those decisions.

The stakes are high for non-compliance. According to Ogletree Deakins, penalties for violating AB 3312 can reach $5,000 per violation. More importantly, the California Privacy Protection Agency (CPPA) is empowered to investigate and prosecute violations, meaning companies could face significant legal costs and reputational damage. This law forces employers to be transparent about how AI is influencing employment decisions.

What types of AI trigger the disclosure? AB 3312 defines “automated decision-making system” broadly, encompassing any software or algorithmic tool used to substantially impact or determine employment-related decisions. This includes systems used for recruitment, hiring, performance evaluation, promotion, and even termination. It's a very wide net.

- Disclosure Requirement: Employers must inform applicants and employees about the use of AI in employment decisions.

- Information Provided: Details on how the AI system works, data collected, and decision-making process.

- Challenge Rights: Employees must be informed of their right to challenge AI-driven decisions.

- Penalties: Violations can result in fines of up to $5,000 per violation.
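For teams drafting these notices, the required contents can be treated as a simple checklist. The Python sketch below is one way to do that. The class name, field names, and sample values are hypothetical, chosen to mirror the items listed above and not the statute's wording, so treat it as a starting point, not a compliance tool.

```python
from dataclasses import dataclass, field

# Hypothetical field names that mirror the notice contents described above.
# The statute's actual wording may differ.
REQUIRED_FIELDS = (
    "system_types",
    "how_it_works",
    "data_collected",
    "decision_logic",
    "challenge_process",
)


@dataclass
class AdmNotice:
    """Draft notice about an automated decision-making system."""
    system_types: list = field(default_factory=list)    # kinds of systems used
    how_it_works: str = ""                               # plain-language description
    data_collected: list = field(default_factory=list)  # categories of data
    decision_logic: str = ""                             # how decisions are reached
    challenge_process: str = ""                          # how to contest a decision

    def missing_fields(self):
        """Return the names of required fields that are still empty."""
        return [name for name in REQUIRED_FIELDS if not getattr(self, name)]


# Illustrative draft with two items still unwritten.
draft = AdmNotice(
    system_types=["resume screening"],
    how_it_works="Ranks applications against the job description.",
    data_collected=["resume text", "application answers"],
)
print(draft.missing_fields())  # ['decision_logic', 'challenge_process']
```

A check like this only confirms that each section has been filled in. Whether the wording is adequate is still a question for counsel.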

What Does 'Automated Decision-Making' Actually Mean?

The term 'automated decision-making' is central to these new laws, but it’s surprisingly ambiguous. Generally, it refers to decisions made entirely or substantially by an algorithm, without direct human intervention. Concrete examples include using AI to screen resumes, evaluate employee performance reviews, or determine eligibility for promotions.

But what about situations where AI assists a human manager in making a decision? For example, an AI tool might flag certain employees as "high-risk" for turnover, prompting a manager to have a conversation with them. Is that considered automated decision-making? The law doesn’t offer a clear-cut answer. It seems the key is whether the AI’s input is substantial in influencing the final outcome.

That ambiguity will likely trigger legal disputes. Courts will need to grapple with the nuances of AI assistance versus AI control. The intent seems to be to protect employees from decisions made solely or substantially by algorithms, particularly when those algorithms are opaque or biased.

CCTV Monitoring Workplace Harassment Case Highlights Employee Privacy Rights

— nomi Blog (@nomiblog) August 31, 2025

A few years ago, I visited a private educational institute in Rawalpindi. A female staff member quietly shared how constant CCTV monitoring was affect https://t.co/IyR6qaRIQ7 pic.twitter.com/OIGS6UuaJZ

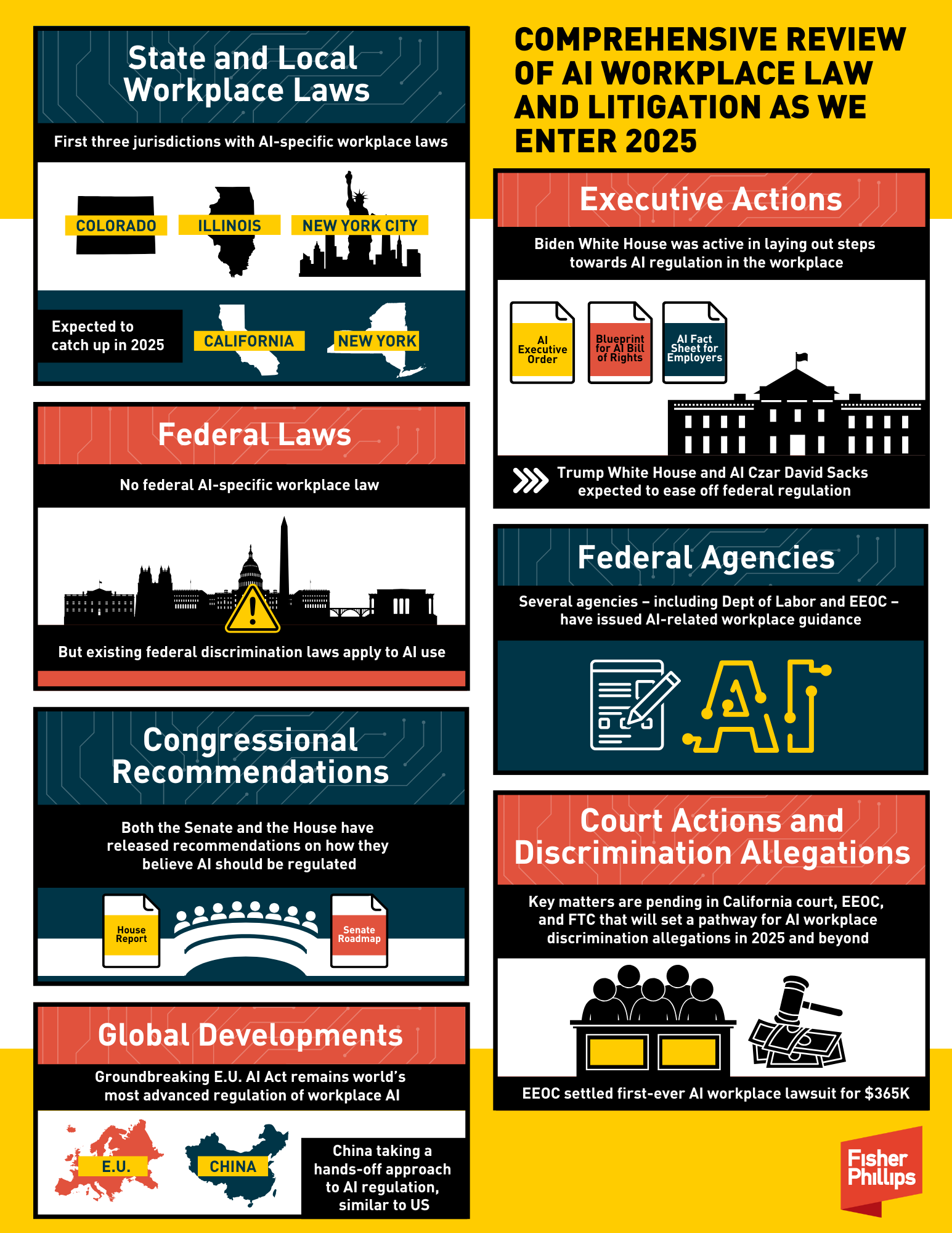

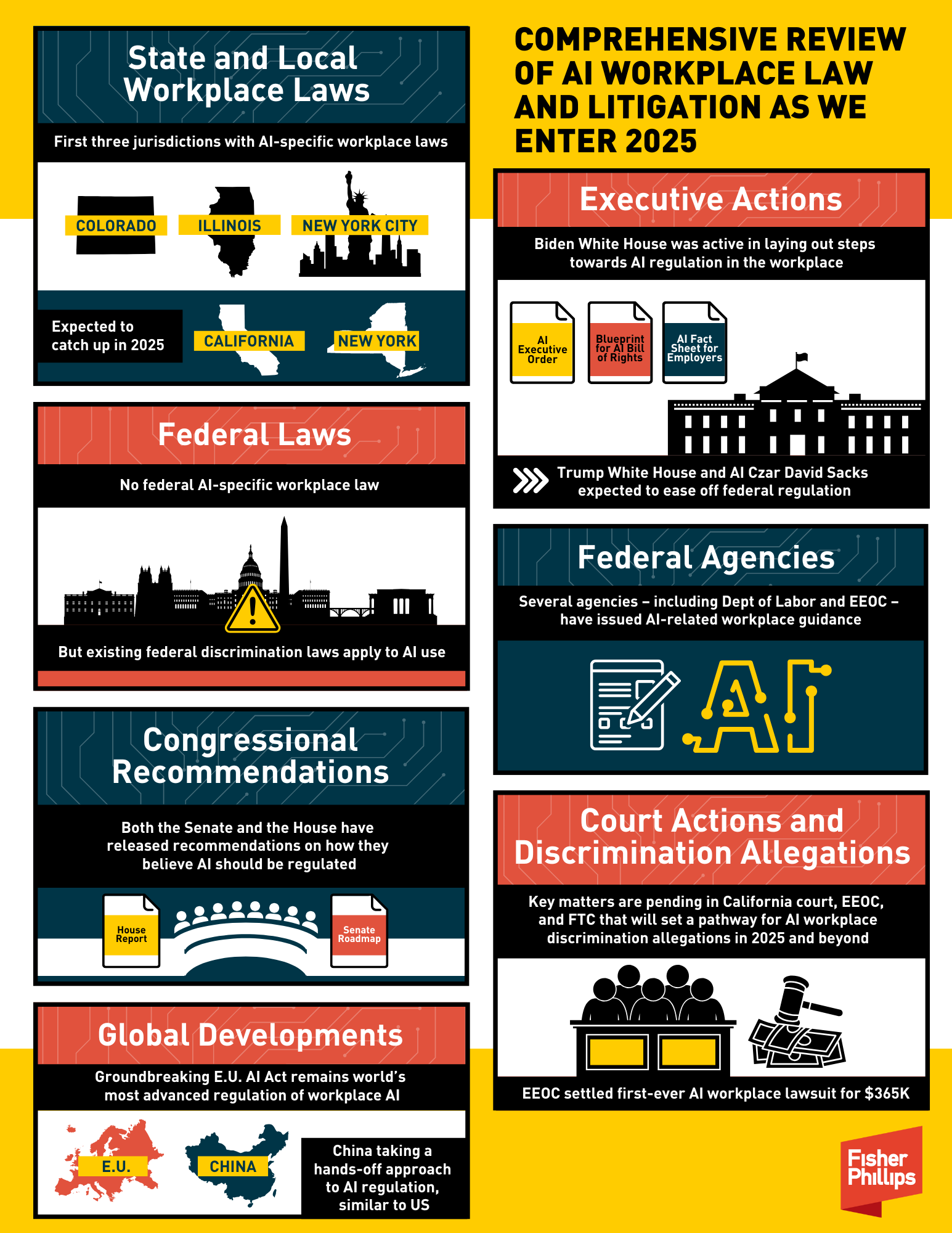

Beyond California: Emerging Legislation in Other States

California isn’t acting in isolation. Several other states are considering similar legislation aimed at regulating AI in the workplace. New York, Illinois, and Washington are among those with bills gaining traction, according to the UC Berkeley Labor Center. These bills are often modeled after California’s AB 3312, but with some key differences.

For instance, some proposals in New York go further by requiring employers to conduct bias audits of their AI systems. This means employers would need to proactively assess whether their AI tools are unfairly discriminating against certain groups of employees. Illinois is focused on protecting biometric data collected through AI-powered surveillance systems. Washington is exploring legislation that would require employers to obtain employee consent before using AI to monitor their work.
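To make the idea of a bias audit concrete, here is a minimal Python sketch of one common screening heuristic: comparing each group's selection rate to the highest group's rate and flagging ratios below 0.8 (the "four-fifths" rule of thumb from US federal employee selection guidelines). The proposals described above do not specify this method. The function names, the threshold default, and the sample numbers are all assumptions for illustration.

```python
def selection_rates(outcomes):
    """Map group -> selected / total for {group: (selected, total)} input."""
    return {
        group: selected / total
        for group, (selected, total) in outcomes.items()
        if total > 0
    }


def impact_ratios(outcomes):
    """Divide each group's selection rate by the highest group's rate."""
    rates = selection_rates(outcomes)
    best = max(rates.values(), default=0.0)
    if best == 0:
        return {group: 0.0 for group in rates}
    return {group: rate / best for group, rate in rates.items()}


def flagged_groups(outcomes, threshold=0.8):
    """Return groups whose impact ratio falls below the threshold."""
    ratios = impact_ratios(outcomes)
    return sorted(group for group, ratio in ratios.items() if ratio < threshold)


# Made-up numbers: (candidates advanced by the tool, candidates screened).
sample = {
    "group_a": (50, 100),
    "group_b": (30, 100),
    "group_c": (45, 100),
}
print(flagged_groups(sample))  # ['group_b']
```

A flagged ratio is a prompt for closer review, not proof of discrimination, and a real audit would also need to consider sample sizes and whatever method the applicable law prescribes.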

The general trend is towards greater employee protection, but the specific approaches vary. There’s a growing recognition that AI poses unique challenges to worker privacy and fairness. While a national standard isn’t yet on the horizon, the momentum is building for more robust regulations.

- New York: Considering bias audits of AI systems.

- Illinois: Focusing on biometric data privacy.

- Washington: Exploring consent requirements for AI monitoring.

State-Level AI Workplace Monitoring Laws (as of late 2024)

| State | Disclosure Requirements | Scope of AI Covered | Employee Rights | Penalties for Non-Compliance |
|---|---|---|---|---|
| California | Employers must disclose to employees if AI is being used to make decisions about their employment, including hiring, promotion, and discipline. | Broadly covers 'automated decision systems,' which includes many AI applications used for workplace monitoring and evaluation. | Employees have the right to know what data is being collected and how it’s being used. They can challenge decisions made by the AI and request a human review. | Potential for significant fines and legal action, particularly if the AI system is found to be discriminatory. Details are still developing with ongoing regulations. |
| New York | Proposed legislation (as of late 2024) requires employers to notify employees if automated employment decision tools are being used. | Focuses on tools used for hiring and promotion decisions. Scope may expand with further legislative action. | Employees would have the right to access information about how the AI tool works and to challenge adverse employment actions. | Penalties could include fines and requirements for remediation, but specifics are still under development. |
| Illinois | The Illinois Artificial Intelligence Video Recording Act (AIVRA) requires notice and consent before using AI-powered video surveillance in the workplace. | Specifically addresses AI used in video recording and biometric data analysis. | Employees must be informed about the purpose of the surveillance and given an opportunity to consent. There are restrictions on the sharing of biometric data. | Violations can result in significant financial penalties and potential lawsuits. |
| Washington | Washington’s law (effective January 1, 2024) requires employers to disclose when AI is used in hiring and to provide an explanation of how the AI works. | Primarily focused on AI used in the hiring process. | Employees have the right to receive information about the AI’s function and how it impacts their application. Employers must conduct bias audits. | Fines for non-compliance, and potential for legal action related to discriminatory practices. |

Your Rights: Challenging AI-Driven Decisions

If you believe an AI-driven decision was unfair or inaccurate, you have the right to challenge it. AB 3312 in California, for example, requires employers to provide employees with an explanation of the decision and an opportunity to review the data used to make it. You can also request a human review of the decision.

The law doesn't necessarily guarantee that your challenge will be successful, but it does give you a voice. Document everything – keep records of your performance, any communication related to the decision, and any evidence you have that supports your claim. You may also consider consulting with an employment attorney to explore your legal options.

Potential legal challenges could center around claims of discrimination, wrongful termination, or breach of contract. The success of these challenges will depend on the specific facts of the case and the interpretation of the law by the courts.

The Impact on Workers’ Compensation Claims

Increased AI monitoring could have a significant impact on workers’ compensation claims. Employers might use AI to scrutinize employee behavior more closely, potentially looking for inconsistencies or evidence that could be used to dispute a claim. This raises concerns about fairness and due process.

For example, AI could analyze video footage from a workplace to determine whether an employee was following safety protocols at the time of an injury. Or it could monitor an employee’s online activity to assess their physical capabilities. It’s also possible that AI could be used to identify potential safety hazards and prevent injuries from occurring in the first place.

However, there’s a risk of bias in AI algorithms used for safety monitoring. If the algorithm is trained on biased data, it could unfairly target certain groups of employees or overlook legitimate safety concerns. Employers must use AI responsibly to avoid perpetuating existing inequalities.

What Employers Need to Do to Prepare

Employers need to take proactive steps to ensure compliance with these new laws. First, conduct a comprehensive AI audit to identify all the ways AI is being used in the workplace. This includes everything from recruitment and hiring to performance evaluation and promotion. Understand what data is being collected, how it’s being used, and who has access to it.
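As a rough illustration of what that audit could produce, the Python sketch below keeps one record per tool and reports two things: which covered employment decisions the tool touches, and which audit questions are still unanswered. The tool names, field names, and the list of covered uses are hypothetical, and a real inventory would follow the definitions in the applicable statute.

```python
from dataclasses import dataclass

# Decision areas mentioned in this article. A real audit would use the
# statute's own list, so treat this set as an assumption.
COVERED_USES = {
    "recruitment",
    "hiring",
    "performance evaluation",
    "promotion",
    "termination",
}


@dataclass
class AiSystemRecord:
    name: str             # hypothetical tool name
    used_for: set         # decisions the tool feeds into
    data_collected: list  # what data is being collected
    access_roles: list    # who has access to that data

    def covered_uses(self):
        """Employment decisions this tool touches, sorted for stable output."""
        return sorted(self.used_for & COVERED_USES)

    def open_questions(self):
        """Audit questions that have no answer recorded yet."""
        gaps = []
        if not self.data_collected:
            gaps.append("data collected")
        if not self.access_roles:
            gaps.append("who has access")
        return gaps


inventory = [
    AiSystemRecord("resume-ranker", {"hiring"}, ["resume text"], ["recruiting team"]),
    AiSystemRecord("chat-sentiment", {"performance evaluation"}, [], []),
    AiSystemRecord("meeting-scheduler", {"calendar booking"}, ["calendar entries"], ["all staff"]),
]

for record in inventory:
    print(record.name, record.covered_uses(), record.open_questions())
```

In this made-up inventory, the first two tools touch covered decisions and would likely need a notice, while the second still has gaps to close before anyone could write one.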

Next, update your privacy policies to reflect your use of AI and inform employees about their rights. Provide clear and concise notices about automated decision-making systems, as required by AB 3312 and similar laws. Train managers on how to handle AI-related issues and how to respond to employee challenges.

It's not just about avoiding penalties; it’s about building trust with employees. Transparency and fairness are essential. By demonstrating a commitment to responsible AI practices, employers can foster a more positive and productive work environment.

Looking Ahead: The Future of AI and Workplace Privacy

These new laws are just the beginning of a much larger conversation about the role of AI in the workplace. It’s likely that California’s law will serve as a model for other states, and we may eventually see a national standard emerge. The federal government may also step in to regulate AI, particularly in areas where state laws are inconsistent.

The key challenge will be balancing the potential benefits of AI – increased productivity, improved efficiency – with the need to protect employee privacy and fairness. It’s a complex issue with no easy answers, and it will require ongoing dialogue between employers, employees, and policymakers. This conversation is far from over.
