AI is watching you work

Sarah, a customer service representative, was shocked to learn that her performance wasn't judged only by customer satisfaction scores. Her employer was also tracking her keystrokes – measuring not just how many emails she sent, but how quickly she typed them. A slower typing speed flagged her for "potential disengagement" and led to an unwanted meeting with HR. This isn’t a futuristic dystopia; it’s happening now.

Companies are adopting AI tools that track keystrokes and even monitor emotional states, and they are doing so faster than most people realize. This isn't just about productivity; it's a fundamental shift in how much privacy you give up when you clock in.

The technology is becoming cheaper and easier to implement, which means more companies – big and small – are using it. Beyond productivity, some employers aim to predict employee turnover, identify potential security risks, or even gauge employee loyalty. Workers need to understand what’s happening now to protect themselves.

Employer Actions Under Scrutiny: Recent Agency Positions

While comprehensive legislation is lagging, US agencies are beginning to take notice of the potential risks posed by AI-driven employee monitoring. The Federal Trade Commission (FTC) has been particularly vocal, signaling a willingness to crack down on companies that use AI in ways that are unfair or deceptive.

According to the International Association of Privacy Professionals (IAPP), the FTC has indicated it will scrutinize the accuracy and fairness of AI-based employment decisions. This includes algorithms used for hiring, promotion, and performance evaluation. The agency has the authority to investigate companies that make false or misleading claims about their AI technologies.

This increased scrutiny signals a shift in the regulatory landscape. It suggests that employers can no longer assume they have free rein to monitor their employees without consequence. The FTC’s actions, combined with growing public awareness of privacy concerns, are likely to lead to increased enforcement in the coming years.

Several companies are already facing scrutiny for their AI monitoring practices, though many cases remain confidential. The FTC has been particularly focused on companies that use AI to make automated employment decisions without providing adequate transparency or opportunities for appeal. It’s a developing situation, but the message is clear: employers need to be careful about how they deploy AI in the workplace.

Your Rights: Notice, Consent, and Access to Data

Even in the absence of comprehensive federal laws, employees have some rights when it comes to AI workplace monitoring. The right to be informed about monitoring practices – often referred to as "notice" – is perhaps the most fundamental. Employers should clearly disclose what types of monitoring they are conducting and why.

The issue of consent is more complicated. In many cases, consent is not explicitly required, particularly for monitoring on company-owned devices. However, some states may require explicit consent for certain types of monitoring, such as video or audio recording. What constitutes valid consent is also a matter of debate.

An emerging right is the right to access and correct data collected about you. While not yet universally recognized, some jurisdictions are beginning to grant employees the ability to request information about the data employers have collected and to challenge its accuracy. To request this data, you’ll typically need to submit a written request to your employer.

If your employer refuses to provide access to your data, you may have legal options, depending on your location. You could file a complaint with a state labor agency or consult with an attorney. Documenting all communication with your employer is crucial in these situations.

State Laws Addressing Workplace Monitoring & AI - 2026 Status

| State | Relevant Law | Monitoring Practices Addressed | Notice Requirements | Enforcement |
|---|---|---|---|---|
| California | California Consumer Privacy Act (CCPA) & California Privacy Rights Act (CPRA) | Collection and use of employee personal information, including data generated through AI-powered monitoring of communications, performance, and location. Focus is on transparency and control over personal data. | Employers must provide notice about the categories of personal information collected, the purposes for collection, and employees' rights under the law. Notice specific to AI monitoring is not explicitly mandated, but such monitoring may fall under the 'automated decision-making' provisions. | The California Privacy Protection Agency (CPPA) is the primary enforcement body. Individuals have a private right of action for certain data breaches. |
| New York | New York SHIELD Act | While not specifically focused on AI, the SHIELD Act requires reasonable security measures to protect private information, which could include data collected through workplace monitoring systems. Applies to businesses collecting New York residents’ private information. | Requires implementation of reasonable safeguards. The law itself sets out no specific notice requirements relating to employee monitoring. | The New York Attorney General is the primary enforcement mechanism. Private right of action is limited. |
| Illinois | Illinois Biometric Information Privacy Act (BIPA) | Specifically regulates the collection, use, and storage of biometric data (fingerprints, facial geometry, etc.). Increasingly relevant as AI-powered monitoring relies on these technologies. | Before collecting biometric data, employers must inform individuals in writing of the purpose and length of collection and obtain a written release; they must also publish a retention and destruction schedule. Detailed notice requirements are central to the law. | Private right of action is available, making BIPA one of the strongest privacy laws in the US. Significant penalties for violations. |
| Connecticut | Connecticut Data Privacy Act (CTDPA) | Grants consumers rights over their personal data, including the right to access, correct, and delete it. Applies to controllers processing personal data of Connecticut residents. Its definition of "consumer" excludes individuals acting in an employment context, which limits its reach into workplace monitoring. | Controllers must provide a privacy notice outlining data collection practices. The law includes provisions on profiling and automated decision-making. | Enforced by the Connecticut Attorney General. No private right of action. |
| Colorado | Colorado Privacy Act (CPA) | Like other comprehensive privacy laws, the CPA gives consumers rights over their personal data, including the right to opt out of certain processing activities. Its definition of "consumer" excludes individuals acting in an employment context. | Requires businesses to provide a readily accessible privacy notice. The Act includes provisions on profiling and automated decision-making. | Enforced by the Colorado Attorney General and district attorneys. No private right of action. |
| Montana | Montana Consumer Data Privacy Act (MCDPA) | Gives Montana residents rights over their personal data, including the right to access, correct, and delete it. Applies to entities doing business in Montana. Its definition of "consumer" excludes individuals acting in an employment context. | Requires businesses to provide a privacy notice outlining data collection practices. Includes provisions on sensitive data. | Enforced by the Montana Attorney General. No private right of action. |

Illustrative comparison only. Verify the current statutory text and any implementing regulations before relying on it.

Proposed Laws to Watch in 2026

The pressure for federal legislation on AI workplace monitoring is building. Several bills have been proposed, aiming to establish clear rules for how employers can use AI to track and evaluate employees. These bills generally focus on requiring transparency, limiting the types of data that can be collected, and providing employees with greater control over their data.

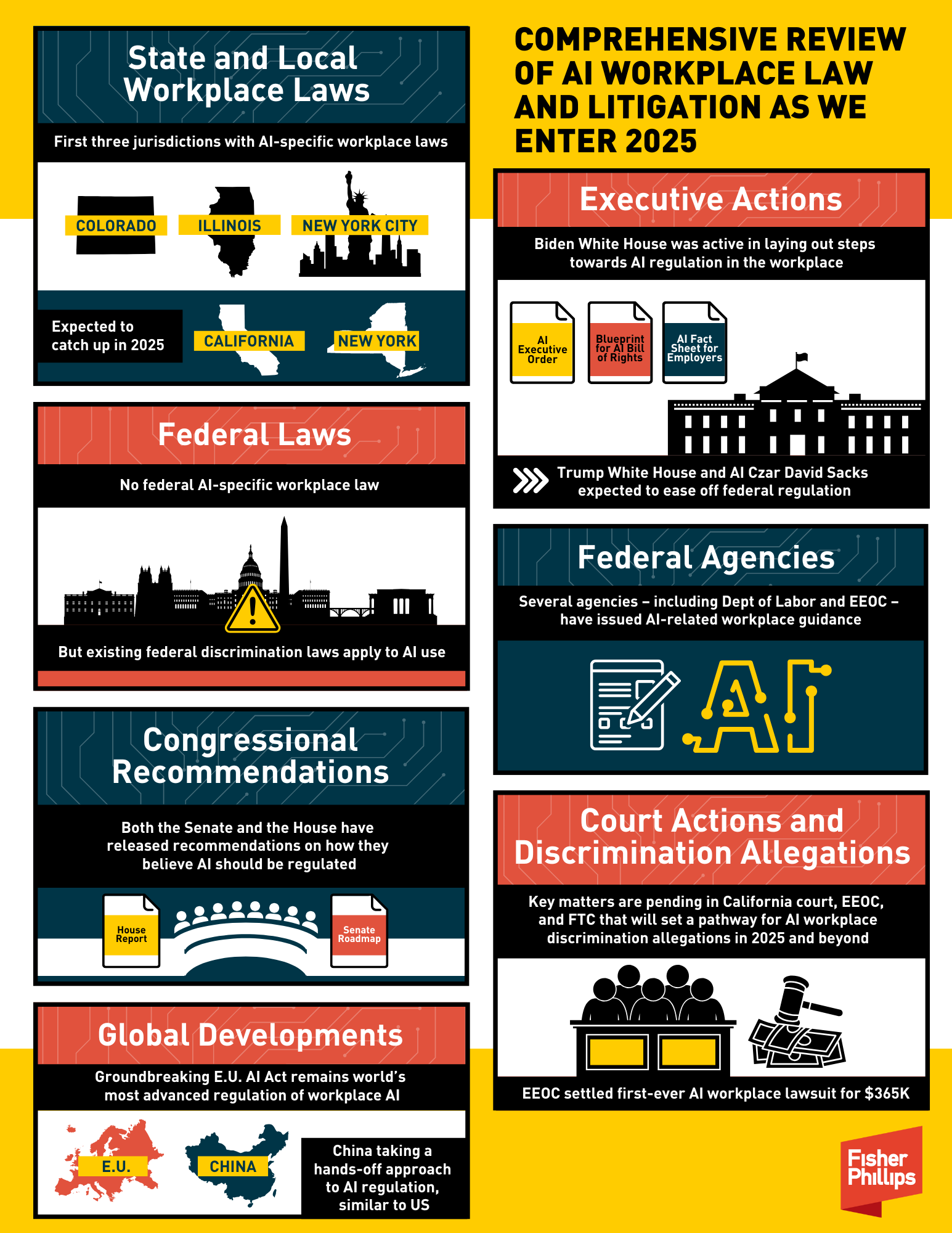

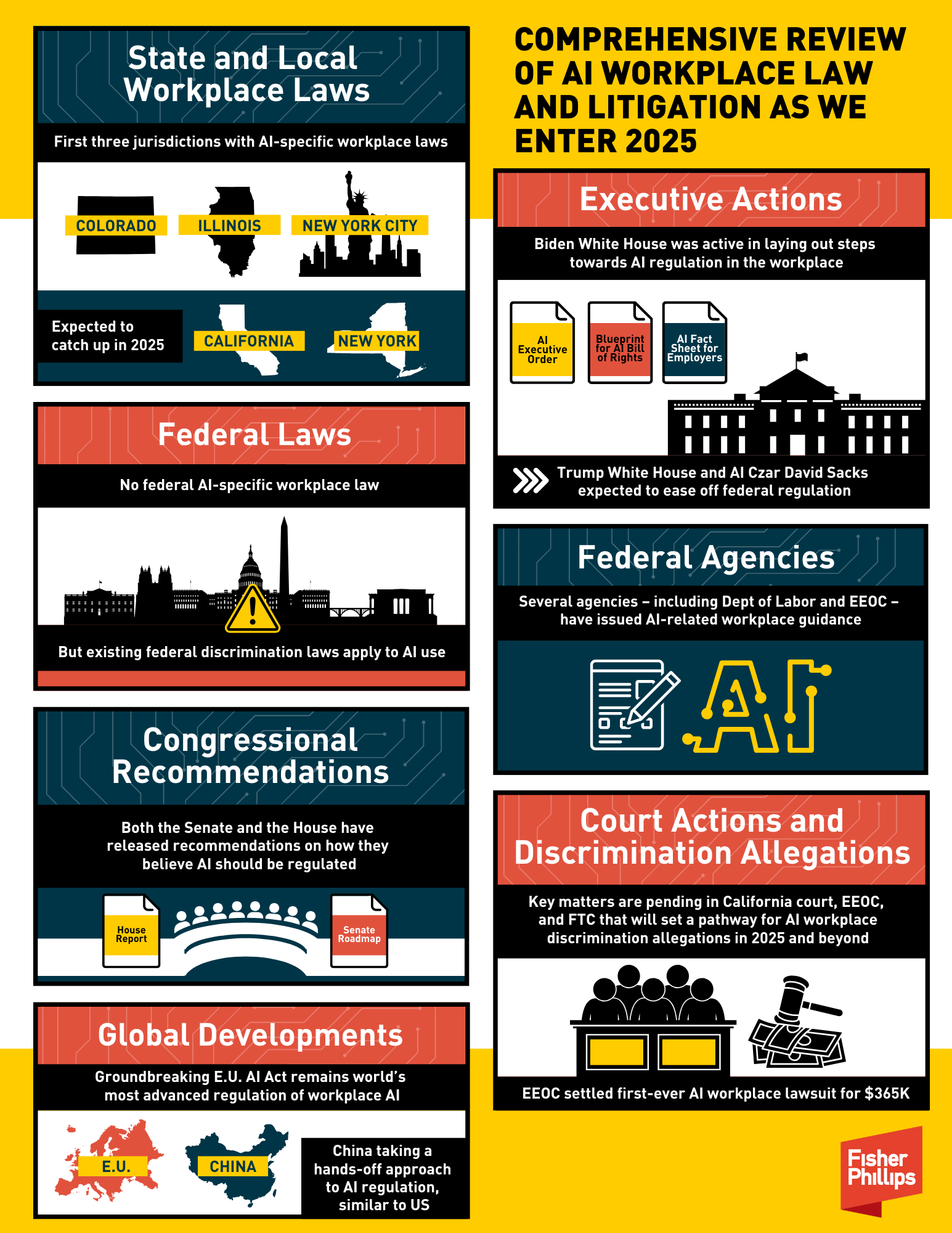

Several states are also considering their own legislation, with California, New York, and Illinois among those leading the charge. State laws could be more stringent than any federal legislation, creating a patchwork of regulations across the country. Their impact could be significant, forcing employers to overhaul their monitoring practices.

Predicting whether these bills will pass is difficult, and strong opposition from business groups is expected. However, growing public awareness of privacy concerns and increasing scrutiny from regulators could create enough momentum to overcome these obstacles. A realistic assessment suggests that some form of legislation is likely to be enacted within the next few years.

Ongoing legal challenges to AI monitoring practices are also shaping the landscape. Several lawsuits have been filed against companies alleging that their AI monitoring practices violate employee privacy rights. These cases could set important legal precedents that will influence the future of AI workplace monitoring.

- Federal Bills: Focus on transparency, data limitations, and employee control.

- State Legislation: California, New York, and Illinois are leading the way.

- Legal Challenges: Lawsuits could set important legal precedents.
