The shift to algorithmic surveillance

Workplace monitoring isn’t new. Cameras in warehouses and time clocks have been around for decades. But artificial intelligence is fundamentally changing how employers monitor their staff: monitoring now goes well beyond tracking time and into analyzing behavior, sentiment, and even emotional states.

Employers often justify this increased surveillance by citing benefits like improved security, increased productivity, and protection of company assets. They argue AI can identify potential risks, streamline workflows, and ensure compliance. However, this comes at a cost: a significant erosion of employee privacy and a potential for biased or inaccurate assessments.

Algorithms now judge performance, mood, and potential. Rules around this tech change fast, so you need to know where the legal lines are drawn in 2026.

How AI tracks you

AI employee monitoring covers a wide range of tools and techniques. It’s easy to think of it as just tracking how long you spend at your computer, but it’s far more sophisticated than that. These tools aim to analyze what you’re doing, how you’re doing it, and even how you feel about it.

Let’s look at some specifics. Communication monitoring involves scanning emails, Slack messages, and Microsoft Teams chats – not just for keywords, but for sentiment. AI can attempt to gauge employee morale or identify potential dissent. Then there’s activity tracking, which goes beyond simple website and application usage. This can include keystroke logging, mouse movement tracking, and even screenshots taken at random intervals.

More invasive still is biometric monitoring. This involves using facial expression analysis to assess engagement levels or voice analysis to detect stress. Finally, performance analytics use AI to generate performance scores based on a variety of data points, potentially leading to biased evaluations. These scores can influence promotions, raises, and even job security. It's a lot, and it’s happening now.

The tech is often flawed. Sentiment analysis that leans on keyword matching misses sarcasm and context. Facial analysis tools have repeatedly shown higher error rates for people of color. Treat these algorithmic scores as guesses, not facts.
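To see why keyword matching misses sarcasm, here is a minimal Python sketch of a keyword-based sentiment scorer. The word lists and the `keyword_sentiment` function are made up for illustration; commercial tools are more elaborate, but the failure mode is similar.

```python
# Hypothetical word lists for illustration only; real products use
# larger lexicons or trained models.
POSITIVE = {"great", "love", "thanks", "happy", "awesome"}
NEGATIVE = {"hate", "angry", "terrible", "quit", "unfair"}


def keyword_sentiment(message: str) -> int:
    """Score a message as (positive word count) minus (negative word count)."""
    words = [w.strip(".,!?\"'").lower() for w in message.split()]
    positives = sum(w in POSITIVE for w in words)
    negatives = sum(w in NEGATIVE for w in words)
    return positives - negatives


sarcastic = "Oh great, another weekend shift. Love that for me."
polite = "I hate to nag, but the report is due Friday."

print(keyword_sentiment(sarcastic))  # positive score for an unhappy message
print(keyword_sentiment(polite))     # negative score for a routine reminder
```

The sarcastic complaint scores as upbeat because it contains "great" and "love", while a polite reminder scores as negative because of "hate". Neither score reflects what the writer meant.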

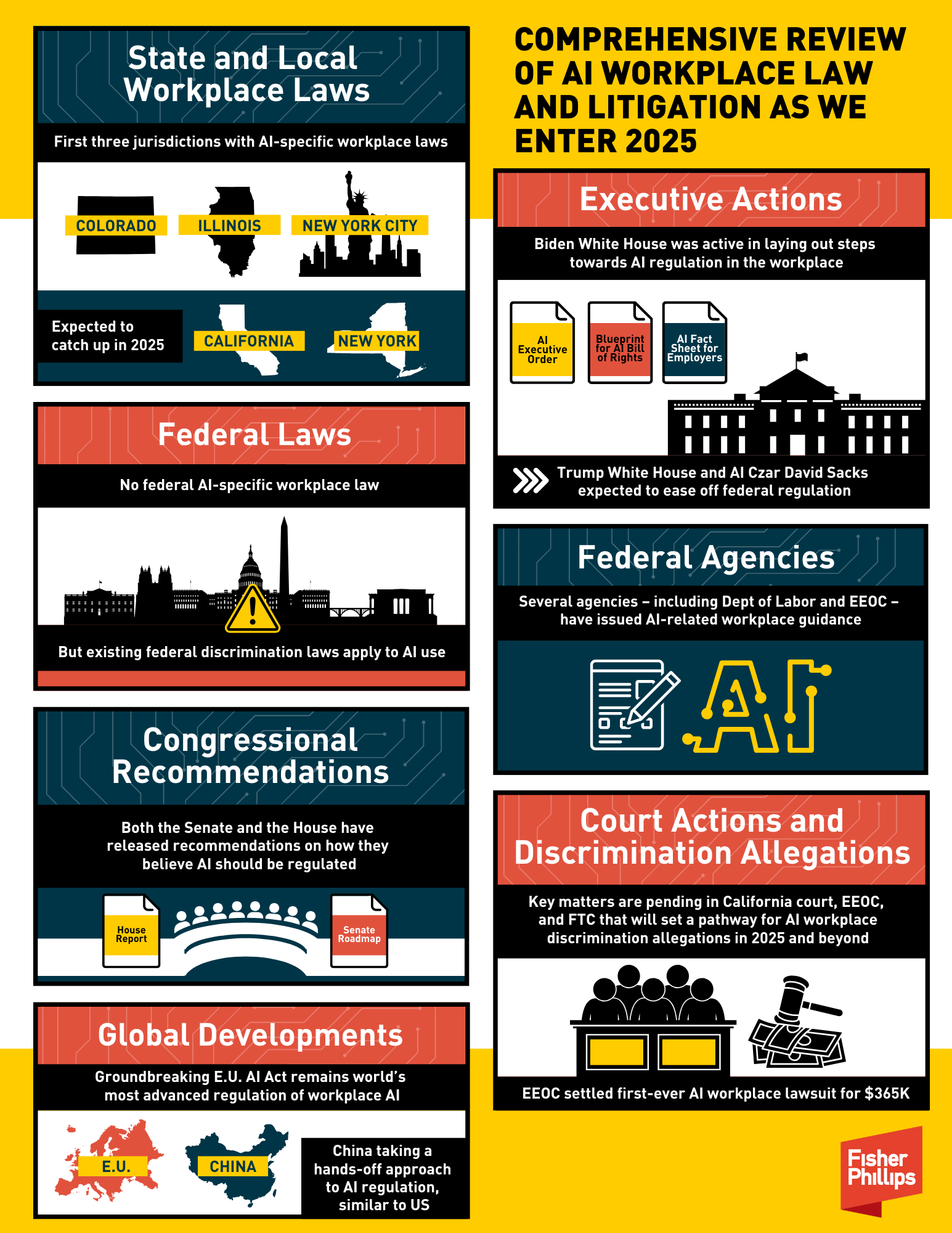

Federal rules are a mess

Currently, there isn't a single, comprehensive federal law in the United States specifically regulating AI workplace monitoring. This leaves many employees with limited legal recourse. However, that doesn't mean existing laws are irrelevant. Several federal statutes could be applied to challenge certain monitoring practices.

The National Labor Relations Act (NLRA) protects employees’ rights to organize and engage in collective bargaining. Monitoring employee communications could potentially violate the NLRA if it’s seen as interfering with these rights. Title VII of the Civil Rights Act prohibits discrimination based on race, religion, sex, and other protected characteristics. AI monitoring tools that perpetuate or amplify existing biases could run afoul of Title VII. The Americans with Disabilities Act (ADA) might be relevant if biometric monitoring reveals information about an employee’s medical condition.

According to a report from the IAPP (International Association of Privacy Professionals) published in October 2024, the FTC and EEOC are beginning to take a more active stance against potentially discriminatory AI practices. They’ve issued guidance and brought enforcement actions related to algorithmic bias, signaling a growing concern about the ethical and legal implications of AI in the workplace. However, clear federal rules are still lacking, creating uncertainty for both employers and employees.
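One rough way to picture algorithmic bias is the "four-fifths rule" from the federal Uniform Guidelines on Employee Selection Procedures: if one group's selection rate is less than 80% of the highest group's rate, that is commonly treated as a signal worth investigating. It is a rule of thumb, not a legal test. The Python sketch below applies it to invented numbers for an AI tool that labels employees "high performer"; the group names and counts are hypothetical.

```python
def selection_rate(selected: int, total: int) -> float:
    """Share of a group that received the favorable outcome."""
    return selected / total


def adverse_impact_ratio(rates: dict) -> dict:
    """Divide each group's rate by the highest group's rate."""
    top = max(rates.values())
    return {group: rate / top for group, rate in rates.items()}


# Hypothetical counts: employees labeled "high performer" by a scoring tool.
rates = {
    "group_a": selection_rate(48, 80),
    "group_b": selection_rate(21, 60),
}

ratios = adverse_impact_ratio(rates)

# Flag any group whose ratio falls below four-fifths (0.8).
flagged = [group for group, ratio in ratios.items() if ratio < 0.8]

for group, ratio in ratios.items():
    print(f"{group}: ratio {ratio:.2f}")
print("Below four-fifths threshold:", flagged)
```

A flagged group does not prove discrimination, and a passing ratio does not rule it out. It is only a first screen that tells you where to look more closely.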

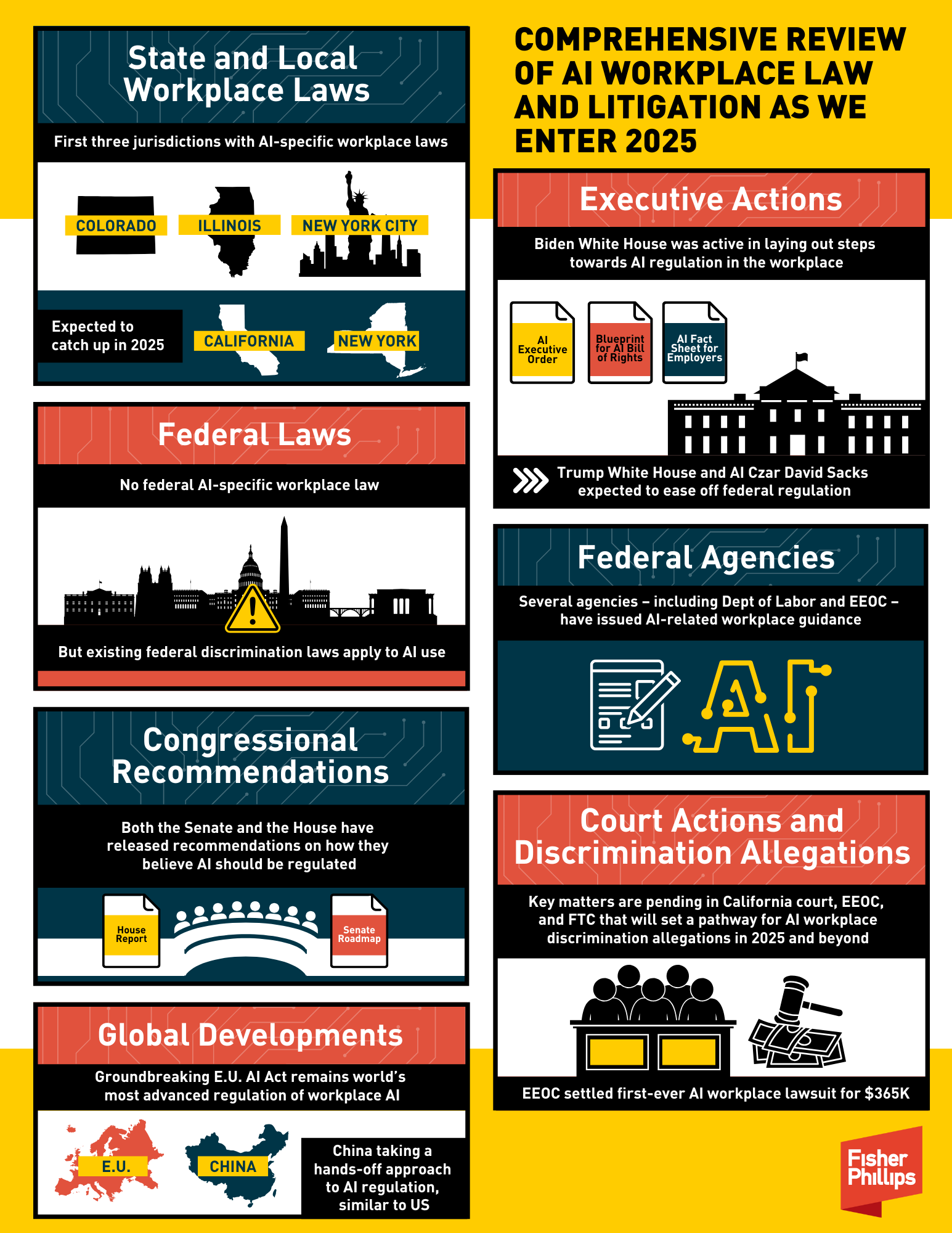

States leading on privacy

In the absence of strong federal regulation, states are taking the lead in protecting employee privacy. Several states have enacted or are considering laws that specifically address employee monitoring, particularly regarding biometric data and data privacy.

The California Consumer Privacy Act (CCPA), as amended by the California Privacy Rights Act (CPRA), gives employees the right to know what personal information is being collected about them and how it’s being used. New York’s protections are narrower, though it does require employers to give employees written notice of electronic monitoring. Illinois’ Biometric Information Privacy Act (BIPA) is one of the strictest biometric privacy laws in the country, requiring employers to obtain informed written consent before collecting or using biometric data.

Washington state also has strong data privacy laws, and is actively considering legislation to further regulate AI-powered monitoring. Other states, like Maryland and Texas, are also debating similar measures. The trend is clearly towards greater transparency and employee control over their personal data. It's a complex patchwork, and the laws are constantly changing.

Here's a quick look at some key state regulations:

- California (CCPA/CPRA): Broad data privacy rights, including the right to know and delete personal information.

- Illinois (BIPA): Strict regulations on the collection and use of biometric data, requiring informed consent.

- New York: Growing data privacy laws, providing some protections for employee data.

- Washington: Strong data privacy laws with potential for further AI-specific regulation.

State AI Employee Monitoring Laws: A Comparative Overview (2026)

| State | Biometric Data Restrictions | Communication Monitoring Restrictions | Activity Tracking Restrictions | Consent Requirements |
|---|---|---|---|---|
| California | Strong – Requires notice at collection; biometric data is treated as sensitive personal information. CCPA/CPRA provides broad data privacy rights impacting monitoring. | Significant – All-party consent generally required for recording communications. Monitoring employee emails is subject to scrutiny. | Moderate – Activity tracking permissible, but must be demonstrably work-related and transparent. | Generally Required – Clear and conspicuous notice and, in some cases, explicit consent. |
| New York | Developing – No comprehensive biometric law, but existing privacy laws may apply. Increased legislative attention. | Moderate – One-party consent for recording communications is permitted, but monitoring may be restricted based on reasonable expectation of privacy. | Moderate – Activity tracking is generally allowed, but employers should have a legitimate business need. | Often Required – Employers should provide notice of monitoring practices. |
| Illinois | Very Strong – Biometric Information Privacy Act (BIPA) imposes strict requirements for collection, use, and storage of biometric data. Private right of action. | Significant – All-party consent is generally required to record private conversations, and privacy expectations matter. | Moderate – Tracking is allowed with reasonable justification. | Generally Required – BIPA requires informed written consent for biometric data collection. |
| Washington | Moderate – State privacy act provides some protections regarding sensitive data, potentially impacting biometric monitoring. | Significant – All-party consent is generally required to record private communications. | Moderate – Employers should have a legitimate business purpose for tracking. | Notice Recommended – While not always legally mandated, providing notice is considered best practice. |
| Texas | Limited – No comprehensive biometric law. Some specific statutes address certain biometric applications. | Limited – One-party consent for recording communications. Fewer restrictions on email monitoring. | Permissive – Broadly allows activity tracking with limited restrictions. | Limited – No general requirement for consent, but transparency is encouraged. |
| Florida | Limited – No comprehensive biometric law. Limited protections currently exist. | Moderate – All-party consent is generally required to record communications. Relatively few restrictions on monitoring employee email on company systems. | Permissive – Allows broad activity tracking with limited restrictions. | Limited – No general requirement for consent. |


Legal limits for bosses

Employers aren't prohibited from monitoring employees altogether, but there are limits. Generally, monitoring practices are considered legal if they are justified by a legitimate business need, and employees are given notice of the monitoring. For example, monitoring customer service interactions to ensure quality is usually acceptable.

However, practices that are overly intrusive or discriminatory are likely to get employers into trouble. Secretly recording employee conversations, monitoring personal devices, or using AI to make biased employment decisions are all risky. Transparency is key. Employers should clearly communicate what data is being collected, how it’s being used, and who has access to it.

Minimizing privacy intrusions is also important. Employers should only collect data that is necessary for the stated business purpose, and they should avoid collecting sensitive personal information unless absolutely necessary. Regularly reviewing and updating monitoring policies is crucial to ensure compliance with evolving laws and best practices. A good rule of thumb: if it feels creepy, it probably is, and it might be illegal.

- Do: Tell employees exactly what is being tracked.

- Do: Justify monitoring with a legitimate business need.

- Do: Minimize the collection of sensitive personal information.

- Don't: Secretly record employee conversations.

- Don't: Monitor personal devices without consent.

- Don't: Use AI to make discriminatory employment decisions.
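The "collect only what you need" principle can be made concrete with an allow-list keyed by business purpose. The Python sketch below is one possible approach, not a compliance tool; the purpose name, field names, and the `minimize` helper are all hypothetical.

```python
# Hypothetical mapping from a stated business purpose to the fields
# that purpose actually needs.
ALLOWED_FIELDS = {
    "call_quality_review": {
        "employee_id",
        "call_id",
        "call_duration_sec",
        "customer_rating",
    },
}


def minimize(record: dict, purpose: str) -> dict:
    """Keep only fields allow-listed for the purpose; unknown purposes keep nothing."""
    allowed = ALLOWED_FIELDS.get(purpose, set())
    return {key: value for key, value in record.items() if key in allowed}


# Hypothetical raw record captured by a monitoring tool.
raw = {
    "employee_id": "e-1042",
    "call_id": "c-77",
    "call_duration_sec": 312,
    "customer_rating": 4,
    "facial_expression_score": 0.31,
    "personal_device_id": "phone-9",
}

stored = minimize(raw, "call_quality_review")
print(sorted(stored))
```

Defaulting to an empty set means a purpose nobody has written down stores nothing, so sensitive fields like the expression score never reach storage by accident.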

Your Rights: What to Do If You're Being Monitored

If you suspect you’re being unfairly or illegally monitored at work, you have several options. First, review your company’s employee handbook and any privacy policies. These documents should outline the company’s monitoring practices. If you have questions, ask your HR department for clarification.

If you believe your rights are being violated, you can file a complaint with the relevant state agency. For example, in California, you can file a complaint with the California Privacy Protection Agency (CPPA). You can also consult with an attorney specializing in employment law to discuss your legal options. According to HR.com, understanding your legal and ethical boundaries is crucial in the age of AI employee monitoring.

If you’re a member of a union, raise the issue with your union representatives; collective bargaining can be a powerful tool for protecting employee rights. Even without a union, the NLRA generally protects private-sector employees who act together to address working conditions. Remember, you’re not alone. Many employees are facing similar concerns about AI monitoring, and there are resources available to help.

Here are some resources to get you started:

- EEOC: The EEOC website provides guides on how algorithmic bias can violate civil rights laws.

- NLRB:

- IAPP:

- Your State’s Labor Agency: Search online for “[Your State] Department of Labor”
