The reality of workplace surveillance

Amazon warehouse workers already live with constant tracking. Algorithms log their walking speed and bathroom breaks to decide who stays and who gets fired. This isn't a futuristic warning; it is the current standard for thousands of employees. AI monitoring is moving into offices and remote setups, fundamentally changing how we are managed.

We’re seeing a shift beyond simple monitoring of computer activity. While tracking emails or website visits isn't new, AI introduces a level of granularity and automation that’s profoundly different. Tools now include productivity scoring, which assigns a numerical value to employees based on a range of metrics, emotion AI that attempts to read emotional states, and even keystroke monitoring to analyze typing patterns.

These technologies aren’t just about measuring output; they’re about predicting behavior and identifying "risks" before they materialize. The promise, from an employer's perspective, is increased efficiency and reduced costs. But the reality, as we're beginning to see, is a workforce under immense pressure and facing serious privacy concerns. It's a fundamental shift in the employer-employee dynamic.

The speed of this adoption is what’s particularly concerning. Businesses are eager to implement these tools, often without fully considering the ethical or legal implications. It’s a bit like the early days of the internet – a rush to innovate without a clear understanding of the long-term consequences. Workers need to be aware of what’s happening and what rights they have.

State laws coming in 2026

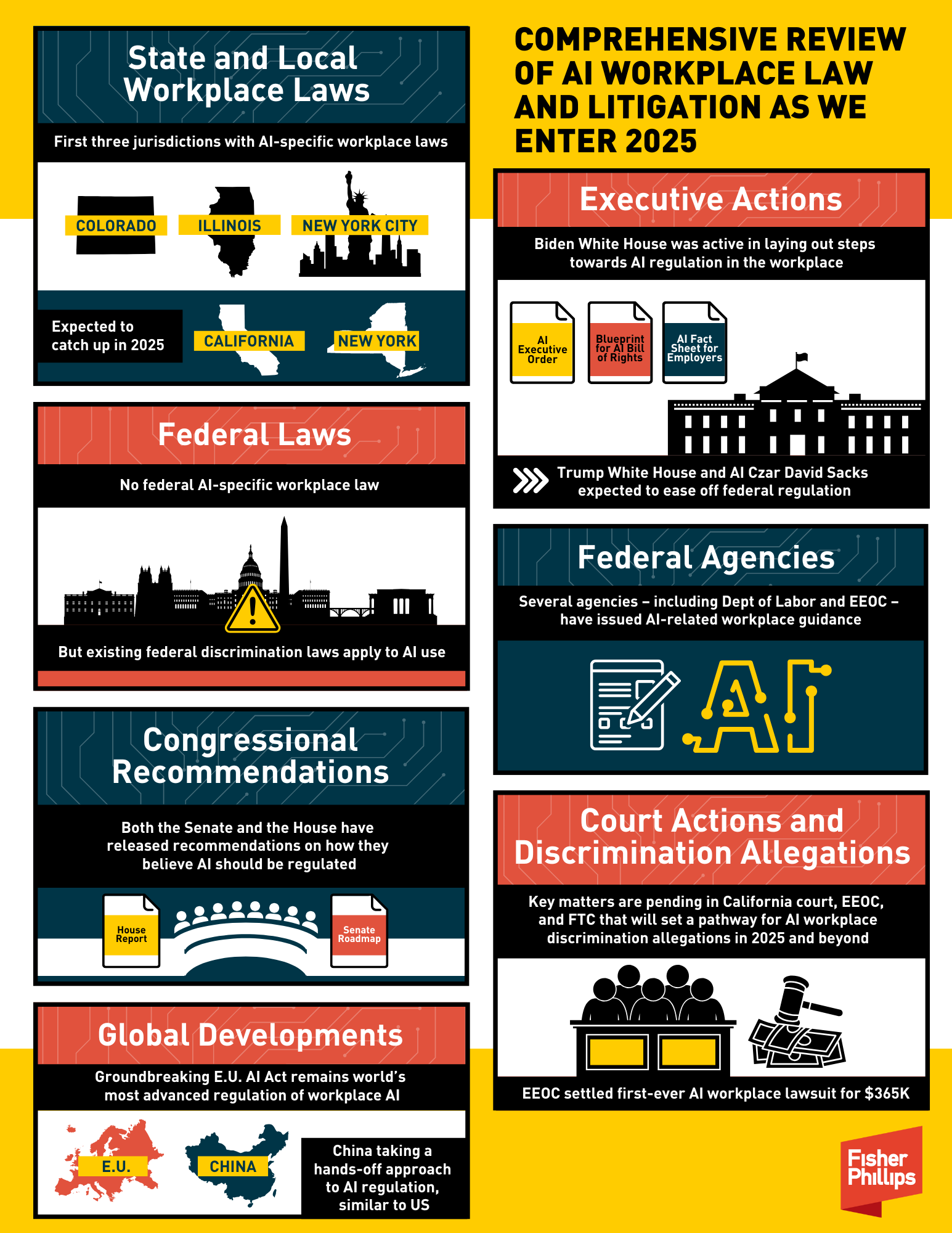

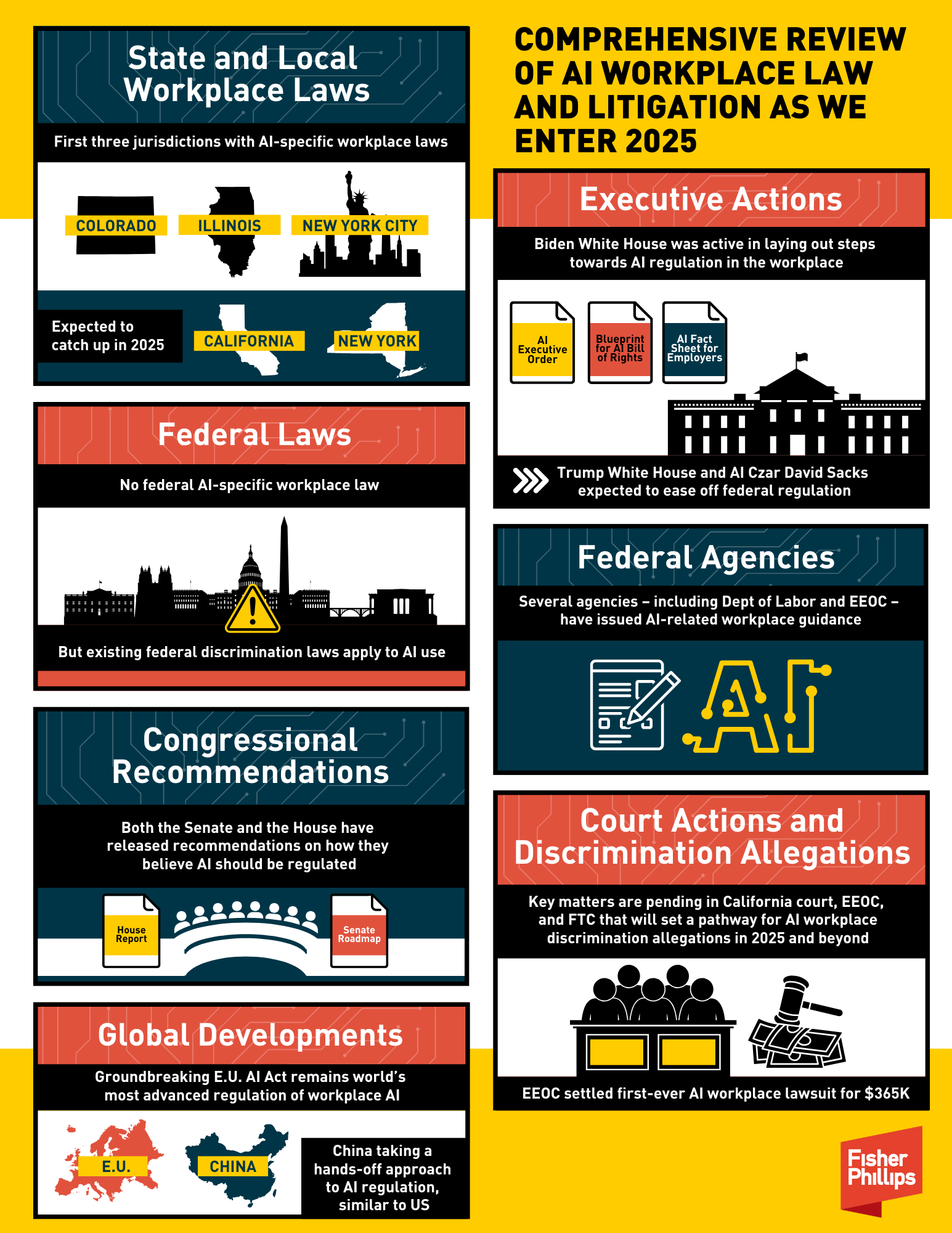

The legal response to AI workplace monitoring is gaining momentum, and 2026 marks a significant turning point. While no single federal law currently governs this area, several states are taking the lead in establishing worker privacy rights. These laws are often complex and vary considerably, so staying informed is essential.

California’s Assembly Bill 2773, effective January 1, 2026, requires employers to disclose to applicants and employees if AI is being used in the hiring process or to make decisions related to their employment. This disclosure must be clear and understandable, outlining the type of AI used and how it impacts decisions. It’s a significant step towards transparency.

New York is also considering legislation similar to California’s, with a focus on automated employment decision tools. A key difference is the potential for a private right of action, allowing employees to sue employers for violations. Illinois, with its existing Biometric Information Privacy Act (BIPA), is already a challenging state for employers utilizing facial recognition or other biometric data for monitoring.

Washington State is focusing on algorithmic accountability, requiring impact assessments for high-risk AI systems used in employment. These assessments must identify and mitigate potential biases. What’s common across these states is a growing awareness that AI isn’t neutral—it can perpetuate and even amplify existing inequalities. The laws are attempting to address that.
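
As a rough illustration of what a bias check inside such an assessment might compute, the sketch below compares favorable-outcome rates across groups. The function names, the sample counts, and the 0.8 threshold are all assumptions made for illustration. The threshold is the "four-fifths" rule of thumb from US federal employment guidance, and nothing here is taken from the text of the state laws discussed above.

```python
# Minimal sketch of a selection-rate impact ratio check.
# All names, counts, and the 0.8 threshold are illustrative assumptions.

def selection_rate(selected: int, total: int) -> float:
    """Share of a group that received the favorable outcome."""
    if total <= 0:
        raise ValueError("total must be positive")
    return selected / total


def impact_ratios(outcomes: dict[str, tuple[int, int]]) -> dict[str, float]:
    """Divide each group's selection rate by the highest group's rate."""
    rates = {group: selection_rate(s, t) for group, (s, t) in outcomes.items()}
    best = max(rates.values())
    if best == 0:
        raise ValueError("no group received any favorable outcomes")
    return {group: rate / best for group, rate in rates.items()}


# Hypothetical data: (number selected, number evaluated) per group.
outcomes = {"group_a": (50, 100), "group_b": (30, 100)}

ratios = impact_ratios(outcomes)

# Groups whose ratio falls below the assumed 0.8 threshold get flagged for review.
flagged = [group for group, ratio in ratios.items() if ratio < 0.8]
print(flagged)
```

A low ratio does not prove discrimination on its own. It is a signal that the tool's outcomes deserve a closer look.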

These laws aren't just about disclosure. They're also starting to restrict what data can be collected and how it can be used. For example, some states are limiting the use of AI to analyze protected characteristics, such as race or gender. The overarching goal is to protect worker privacy and ensure fair treatment.

State AI Workplace Monitoring Laws (as of Late 2023/Early 2024)

| State | Disclosure Requirements | Data Collection Restrictions | Worker Access Rights | Penalties for Non-Compliance |
|---|---|---|---|---|
| California | Employers must disclose if and how AI is used in decisions related to hiring, promotion, and discipline. (Effective March 2025) | Restrictions on the use of AI to discriminate based on protected characteristics. Focus on algorithmic bias. | Workers have the right to request information about the AI systems used and the data they contain about the worker. | Violations can lead to civil penalties and potential legal action. |
| New York | Employers using 'automated employment decision tools' (AEDTs) must provide notice to candidates and employees. (Effective January 1, 2024) | Requires bias audits of AEDTs annually. Focus on disparate impact. | Workers can request information about how the AEDT works and obtain a summary of the assessment results. | Fines up to $500 per violation, plus potential legal action. |
| Illinois | The Illinois Artificial Intelligence Video Recording Act regulates the use of AI-powered video surveillance in the workplace. | Requires clear and conspicuous notice if AI is used for biometric data collection or video monitoring. | Workers have the right to know when they are being recorded and how the data is being used. | Allows individuals to sue for violations, including unauthorized collection or disclosure of biometric data. |
| Maryland | Requires employers to disclose the use of automated decision-making tools in employment decisions. | Focuses on transparency regarding the types of data collected and the purpose of the tool. | Workers can request an explanation of the decision made by the automated tool. | Penalties include fines and potential legal action. |
| Connecticut | Requires employers to disclose the use of AI in certain employment decisions. | Focuses on algorithmic transparency and fairness. | Workers have the right to access information about the AI systems used in decision-making. | Penalties for non-compliance are still being defined. |
| Washington | Requires employers to provide notice before using automated employment decision systems. | Focuses on preventing discriminatory outcomes. | Employees have the right to request information about the automated system’s decision-making process. | Potential for legal action and damages for violations. |

Illustrative comparison based on the article research brief. Verify current statutes, effective dates, and penalties against official state sources before relying on it.

Productivity scoring: what’s being tracked?

Productivity scoring tools are arguably the most widespread form of AI workplace monitoring. They work by collecting data on a vast array of metrics—keystrokes, email response times, meeting attendance, even the length of bathroom breaks, as we’ve seen with Amazon. This data is then fed into an algorithm that assigns a score, ostensibly reflecting an employee’s productivity.

These metrics rarely capture actual value. A fast typist isn't always a productive one. A 2024 CNBC report found that 45% of workers say this constant surveillance ruins their mental health, causing spikes in stress and anxiety.

This system can incentivize presenteeism—showing up and appearing busy—over actual results. Employees may feel pressured to prioritize metrics over meaningful work, leading to burnout and decreased job satisfaction. It creates a culture of surveillance and distrust. The focus shifts from doing good work to looking good on the score sheet.

The potential for bias is also significant. Algorithms are trained on data, and if that data reflects existing biases, the algorithm will perpetuate them. For example, an algorithm might unfairly penalize employees who take more breaks due to medical conditions or caregiving responsibilities. It's a serious concern that needs to be addressed.
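
To make the scoring and bias concerns concrete, here is a minimal sketch of how a score of this kind could be computed. The metric names, the weights, and the `productivity_score` function are hypothetical, invented for this example. Real tools do not necessarily work this way, and vendors rarely publish their formulas.

```python
from dataclasses import dataclass

# Hypothetical weights, chosen only for illustration.
WEIGHTS = {
    "keystrokes_per_min": 0.5,
    "emails_answered": 2.0,
    "break_minutes": -1.0,  # every minute away from the desk lowers the score
}


@dataclass
class DayMetrics:
    keystrokes_per_min: float
    emails_answered: int
    break_minutes: float


def productivity_score(m: DayMetrics) -> float:
    """Weighted sum of tracked activity; it says nothing about work quality."""
    return (
        WEIGHTS["keystrokes_per_min"] * m.keystrokes_per_min
        + WEIGHTS["emails_answered"] * m.emails_answered
        + WEIGHTS["break_minutes"] * m.break_minutes
    )


# Two employees with identical activity. One takes extra breaks,
# for example because of a medical condition or caregiving duties.
baseline = DayMetrics(keystrokes_per_min=60, emails_answered=20, break_minutes=30)
extra_breaks = DayMetrics(keystrokes_per_min=60, emails_answered=20, break_minutes=60)

print(productivity_score(baseline))      # 40.0
print(productivity_score(extra_breaks))  # 10.0
```

Both employees did the same measurable work, yet the second scores far lower purely because of break time. A formula like this rewards looking busy and can penalize people for circumstances unrelated to performance.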

- Tracked metrics: Keystroke speed, email response times, and time spent in specific apps.

- Potential biases: Algorithms trained on biased data can discriminate against certain groups of employees.

- Negative impacts: Increased stress, anxiety, burnout, and decreased job satisfaction.

The rise of emotion AI

Emotion AI, also known as affective computing, attempts to identify employees’ emotional states using facial recognition, voice analysis, and even physiological sensors. The idea is to detect signs of stress, frustration, or disengagement. It’s a deeply unsettling technology with serious privacy and ethical implications.

The accuracy of emotion AI is highly questionable. Studies have shown that these systems are often unreliable and can misinterpret facial expressions and vocal cues. A forced smile or a moment of concentration could be misconstrued as disengagement, leading to unfair evaluations and potential disciplinary action.

The legality of using emotion AI to make employment decisions is also uncertain. Several legal challenges have been filed, arguing that these systems violate privacy laws and discriminate against individuals with mental health conditions. It’s a rapidly evolving legal landscape, and the outcome of these cases could have significant implications.

Beyond the legal concerns, there are fundamental ethical questions. Is it appropriate for employers to monitor and analyze employees’ emotions? Does this create a hostile work environment? This technology treads into very sensitive territory and raises serious concerns about the future of work.

- Methods: Facial recognition, voice analysis, and heart rate monitors are used to guess how you feel.

- Accuracy concerns: Emotion AI systems are often unreliable and prone to misinterpretation.

- Ethical concerns: Invasion of privacy, potential for discrimination, creation of a hostile work environment.

Your rights: accessing and correcting your data

As AI monitoring becomes more prevalent, understanding your rights regarding your data is crucial. Several of the new state laws grant employees the right to access data collected about them by AI systems. This means you can request a copy of the information the employer has gathered, including productivity scores, emotion analysis results, and any other data used for evaluation.

The right to correct inaccurate data is also emerging, although it’s not yet universally guaranteed. If you believe the data collected about you is incorrect or biased, you should have the opportunity to challenge it and request a correction. The procedures for making these requests vary by state and employer.

Typically, you’ll need to submit a written request to your employer’s HR department or designated privacy officer. Be specific about the data you’re requesting and the reason for your request. Employers are generally required to respond within a reasonable timeframe, often 30 days. What happens if they refuse?

If your employer refuses to provide access to your data or correct inaccuracies, you may have legal recourse. Depending on the state, you may be able to file a complaint with a regulatory agency or pursue legal action. It's always a good idea to consult with an attorney specializing in employment law.

Legal limits for employers

Employers have a legitimate interest in monitoring employee productivity and ensuring compliance with company policies. However, that interest must be balanced against employees’ privacy rights. Generally, employers can monitor employee activity on company-owned devices and networks, but they must be transparent about their monitoring practices.

What crosses the line? Collecting data on employees’ personal lives, using AI to discriminate against protected groups, and failing to disclose the use of AI monitoring are all potentially illegal. Employers should also avoid using emotion AI to make employment decisions without a clear legal basis.

The key is to implement AI monitoring systems responsibly and ethically. This includes providing clear notice to employees, limiting data collection to what’s necessary, ensuring data security, and providing opportunities for employees to challenge inaccurate data. It’s not just about avoiding legal trouble; it’s about building trust and maintaining a positive work environment.

Employers should also be aware of the potential legal risks of violating worker privacy rights. These risks include lawsuits, regulatory fines, and reputational damage. A proactive approach to compliance is essential. Ignoring these laws isn’t just unethical, it’s bad for business.

Transparency is paramount. Employers should clearly articulate the purpose of any AI monitoring, the types of data being collected, and how that data will be used. A well-defined AI monitoring policy can help mitigate legal risks and foster a more trusting relationship with employees.
